The currently measured 80% throttling level is way too high to reflect the true situation of this app. It is throttled for only 20ms * 4 = 80ms total-just a fraction of the 400ms CPU run time. But clearly, this second app suffers far less than the first app. With the current way of measuring the throttling level, it will arrive at the same percentage: 80%. It will then be completed in the fifth period. A task with 400ms processing time will run 80ms and then be throttled for 20ms in each of the first four enforcement periods of 100ms. Consider a second container application that has a CPU limit of 800m, as shown below. There is a significant bias with this measurement. In the above example, that would be 4 / 5 = 80%. The old/biased way: Period-based throttling measurementĪs mentioned at the beginning, we used to measure the throttling level as the percentage of runnable periods that are throttled.

Therefore, the total throttled time is 60ms * 4 = 240ms. In the fifth period, the request is completed, so it is no longer throttled.Ĭontainer_cpu_cfs_throttled_seconds_totalįor the first four periods, it runs for 40ms and is throttled for 60ms. It is throttled for only four out of the five runnable periods. Linux provides the following metrics related to throttling, which cAdvisor monitors and feeds to Kubernetes: Linux MetricĬontainer_cpu_cfs_throttled_periods_total Overall, the 200ms task takes 100 * 4 + 40 = 440ms to complete, more than twice the actual needed CPU time: This repeats four times for a 200ms task (like the one shown below) and finally gets completed in the fifth period without being throttled. That means that the app can only use 40ms of CPU time in each 100ms period before it is throttled for 60ms. The 400m limit in Linux is translated to a cgroup CPU quota of 40ms per 100ms, which is the default quota enforcement period in Linux that Kubernetes adopts. There it is a single-threaded container app with a CPU limit of 0.4 core (or 400m). If you watch this demo video, you can see a similar illustration of throttling. The new/unbiased Way: Time-based throttling measurement.The old/biased way: Period-based throttling measurement.In this post, we will show you how this new measurement works and why it will correct both the underestimation and the overestimation mentioned above: In this recent improvement, we measure throttling based on the percentage of time throttled. That resulted in sizing up high-limit applications too aggressively as we tuned our decision-making toward low-limit applications to minimize throttling and guarantee their performance. With such a measurement, throttling was underestimated for applications with a low CPU limit and overestimated for those with a high CPU limit. Prior to this improvement, our throttling indicator was calculated based on the percentage of throttled periods. 
In this new post, we are going to talk about a significant improvement in the way that we measure the level of throttling. Not only can we expose this silent performance killer, Turbonomic will prescribe the CPU limit value to minimize its impact on your containerized application performance. Turbonomic visualizes throttling metrics and, more importantly, takes throttling into consideration when recommending CPU limit sizing. As illustrated in our first blog post, setting the wrong CPU limit is silently killing your application performance and literally working as designed. Please let us know if we are missing some configuration in the above screenshot.It has been a year and a half since we rolled out the throttling-aware container CPU sizing feature for IBM Turbonomic, and it has captured quite some attention, for good reason. Suggested Backoff Time: 68448 millisecondsĪt .(AbstractEmailProcessor.java:328)Īt .(AbstractEmailProcessor.java:381)

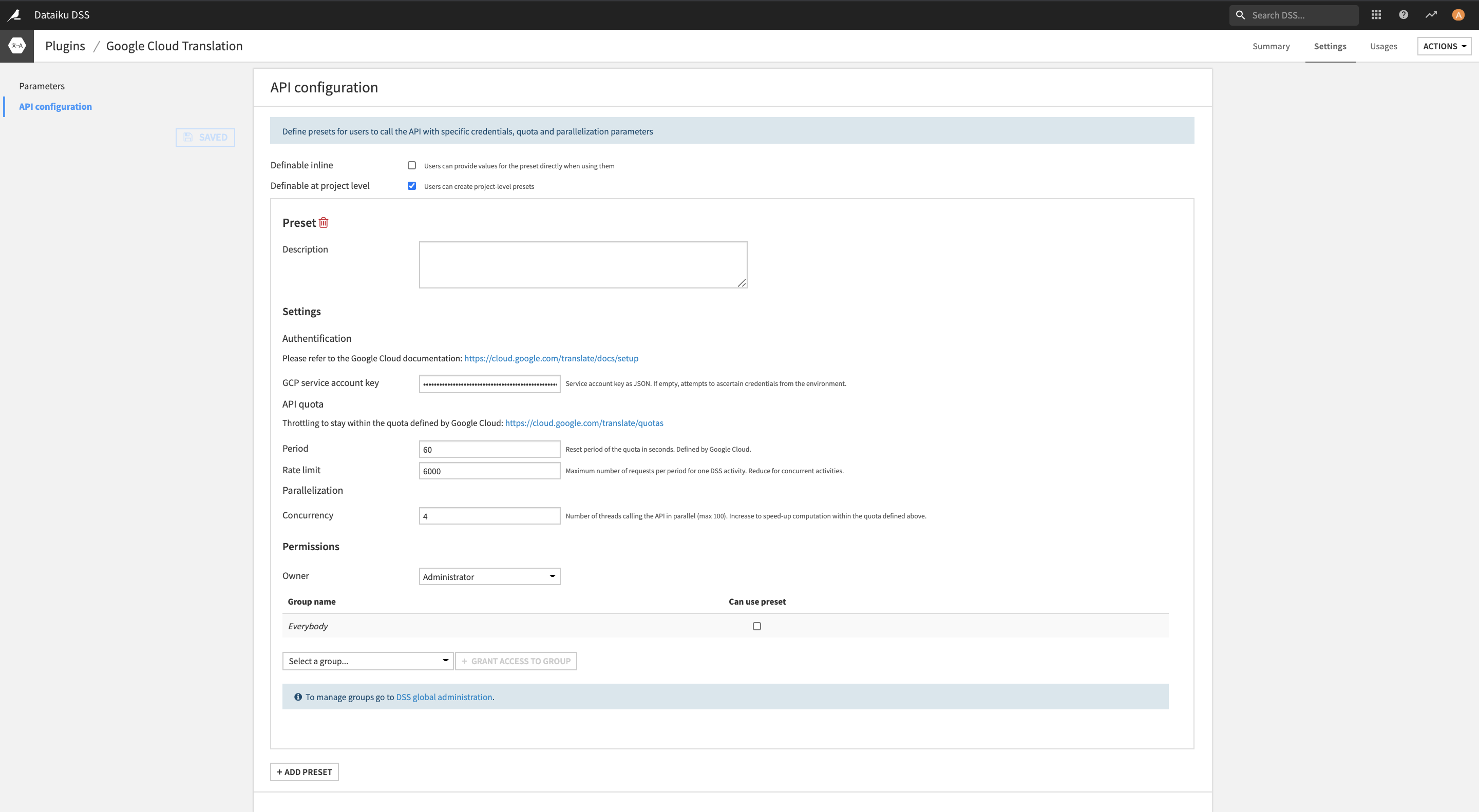

exception.ProcessException: Failed to receive messages from Email server: [ - A3 BAD Request is throttled. Suggested Backoff Time: 68448 milliseconds Suggested Backoff Time: 68448 milliseconds: .exception.ProcessException: Failed to receive messages from Email server: [ - A3 BAD Request is throttled. 11:00:00,286 ERROR o.a. ConsumeIMAP Failed to process session due to .exception.ProcessException: Failed to receive messages from Email server: [ - A3 BAD Request is throttled. 11:00:00,286 ERROR o.a. ConsumeIMAP Failed to receive messages from Email server: [ - A3 BAD Request is throttled. Please find the below error log for your references. We have been observing issues in the IMAP processor while consuming the email from office 365 email box. We are using an IMAP consumer processor in our nifi pipeline to read the email from office 365.
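The arithmetic in the two CPU-limit examples from the throttling post (a 200ms task under a 400m limit, and a 400ms task under an 800m limit) can be replayed with a small simulation. This is only an illustrative sketch, not Turbonomic's or the kernel's actual implementation: the names `simulate`, `ThrottleStats`, and `period_based_pct` are made up here, and it assumes a single-threaded task that starts exactly at the beginning of a 100ms enforcement period with nothing else competing for the quota.

```python
from dataclasses import dataclass

PERIOD_MS = 100  # default quota enforcement period cited in the post


@dataclass
class ThrottleStats:
    runnable_periods: int   # periods in which the task had work to do
    throttled_periods: int  # periods in which it exhausted the quota with work left
    throttled_ms: int       # total time spent waiting for the next period
    wall_ms: int            # elapsed time until the task finished


def simulate(limit_millicores: int, task_cpu_ms: int) -> ThrottleStats:
    """Replay a single-threaded task under a CPU limit, period by period.

    Hypothetical helper for illustration only.
    """
    quota_ms = limit_millicores * PERIOD_MS // 1000  # e.g. 400m -> 40ms per 100ms
    if quota_ms <= 0:
        raise ValueError("limit too small to make progress in this sketch")

    remaining = task_cpu_ms
    runnable = throttled = throttled_ms = wall_ms = 0
    while remaining > 0:
        runnable += 1
        run = min(quota_ms, remaining)
        remaining -= run
        if remaining > 0:
            # Quota used up with work left: wait out the rest of the period.
            throttled += 1
            throttled_ms += PERIOD_MS - run
            wall_ms += PERIOD_MS
        else:
            # Task finished inside this period, so it is not throttled.
            wall_ms += run
    return ThrottleStats(runnable, throttled, throttled_ms, wall_ms)


def period_based_pct(stats: ThrottleStats) -> float:
    """Old measurement: share of runnable periods that were throttled."""
    return 100 * stats.throttled_periods / stats.runnable_periods


for limit, task in [(400, 200), (800, 400)]:
    s = simulate(limit, task)
    print(f"{limit}m limit, {task}ms task: "
          f"period-based {period_based_pct(s):.0f}%, "
          f"throttled {s.throttled_ms}ms, wall time {s.wall_ms}ms")
# 400m limit, 200ms task: period-based 80%, throttled 240ms, wall time 440ms
# 800m limit, 400ms task: period-based 80%, throttled 80ms, wall time 480ms
```

Both apps come out at the same 80% under the period-based measurement, even though the first is throttled for 240ms against 200ms of CPU work and the second for only 80ms against 400ms, which is the bias the post describes.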
